Learn Torch from a COOL application: using DL to imitate artistic style

Update: I added some more details on the principle at the end of the post.

This post covers a cool application: given a content picture and an artwork, generate a new picture that has the content of the content picture and the style of the artwork. The following picture is copied from the original paper.

You can browse jcjohnson/neural-style, which has plenty of demos. It is a Torch implementation, and there are other implementations in different tools. You can also read the original paper to understand the principle or see more pictures.

It is similar to many image reconstructions using neural networks: instead of optimizing the network weights, fix the weights and do gradient descent on the input image. So, what we need is a way to define the loss.
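To make the "fix the weights, optimize the input" idea concrete, here is a minimal pure-Python sketch. It is a toy, not the neural-style code: the "network" is an assumed fixed linear map `W`, and `features`, `loss_and_grad`, and `reconstruct` are hypothetical names chosen for illustration.

```python
# Toy illustration: the "network" is a fixed linear map W; only the input x is updated.
W = [[1.0, 0.5, -0.5],
     [0.0, 1.0, 2.0]]  # fixed weights, never changed during optimization


def features(x):
    """Forward pass of the toy network: one feature per row of W."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def loss_and_grad(x, target):
    """Squared loss between features(x) and target, and its gradient w.r.t. x."""
    diff = [f - t for f, t in zip(features(x), target)]
    loss = sum(d * d for d in diff)
    # d(loss)/d(x_j) = 2 * sum_i W[i][j] * diff[i]
    grad = [2.0 * sum(W[i][j] * diff[i] for i in range(len(W)))
            for j in range(len(x))]
    return loss, grad


def reconstruct(target, steps=500, lr=0.05):
    """Plain gradient descent on the input, starting from an all-zero 'image'."""
    x = [0.0, 0.0, 0.0]
    for _ in range(steps):
        _, grad = loss_and_grad(x, target)
        x = [xi - lr * g for xi, g in zip(x, grad)]
    return x


reference = [1.0, 2.0, 3.0]      # stands in for the original image
target = features(reference)     # features we want the generated input to match
x = reconstruct(target)
final_loss, _ = loss_and_grad(x, target)
print(final_loss)
```

Note that the recovered `x` matches the target features but does not have to equal `reference`: different inputs can share the same features, which is part of why the choice of loss matters so much.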

First, forget about the loss. Let’s see what we can learn from their code.

- How to use VGG.

- ……Em, this is enough for a newbie like me.

So, have fun. :)

**Update:** I tried some more.

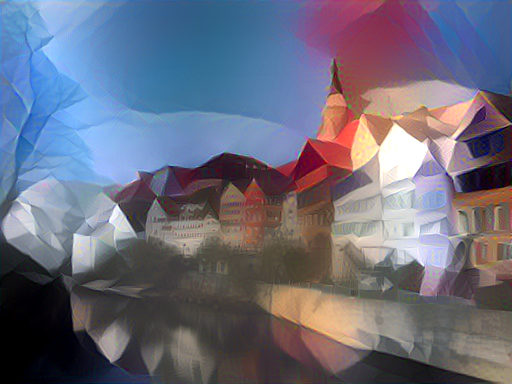

Can you tell what these two styles are? Honestly, the Mondrian one looks really bad, but you can still tell. And the low-poly one works well, in my opinion.

More details on the principle:

There are two kinds of reconstruction in this work. One is called content reconstruction. It tries to reconstruct the input image from the features of the original image (at different layers) using the fixed, pretrained network. The objective is for the generated image and the original image to have features that are as close as possible at the same layer, so the loss function is the squared loss.
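As a rough sketch of that squared loss, assume the features of one layer are given as a list of channels, each a flat list of responses. The function name `content_loss` is hypothetical, and the scaling constant used in the paper is left out.

```python
def content_loss(generated_features, original_features):
    """Sum of squared differences between two feature maps of the same layer.

    Each argument is a list of channels; each channel is a flat list of responses.
    """
    return sum(
        (g - o) ** 2
        for gen_channel, orig_channel in zip(generated_features, original_features)
        for g, o in zip(gen_channel, orig_channel)
    )


original = [[1.0, 2.0], [0.0, 3.0]]    # made-up features of the original image
generated = [[1.0, 1.0], [2.0, 3.0]]   # made-up features of the generated image
print(content_loss(generated, original))  # 0 + 1 + 4 + 0 = 5.0
```

The loss is zero only when the two feature maps agree at every position of every channel, so it cares about where things are in the image.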

The other reconstruction, called style reconstruction, is a little trickier. The only difference from content reconstruction is the objective. We don’t care about the content of the image; we care about the style, or in other words, the pattern. To get there, the authors define a matrix called the Gram matrix. Each layer has its own Gram matrix, which is the correlation matrix of the different filter responses on that layer. That is, each layer has multiple channels, and each channel gives a filter response (from a convolution, rectification, or pooling layer). For each pair of filter responses we calculate a correlation (the inner product of the two flattened responses), so we end up with a square correlation matrix.

The loss is the squared loss between the Gram matrix of the generated image and the Gram matrix of the style image (the artwork). So what the authors claim here is that the Gram matrix captures the style of an image: two images with similar Gram matrices have similar styles.
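The Gram matrix and the style loss described above can be sketched in a few lines of pure Python. This is an illustration under assumptions, not the code from neural-style: features are a list of channels with flattened responses, the names `gram_matrix` and `style_loss` are hypothetical, and the normalization constants from the paper are omitted.

```python
def gram_matrix(layer_features):
    """Inner product between every pair of channels (flattened filter responses)."""
    return [
        [sum(a * b for a, b in zip(ch_i, ch_j)) for ch_j in layer_features]
        for ch_i in layer_features
    ]


def style_loss(generated_features, style_features):
    """Squared loss between the Gram matrices of two feature maps of one layer."""
    g_gen = gram_matrix(generated_features)
    g_style = gram_matrix(style_features)
    return sum(
        (a - b) ** 2
        for row_gen, row_style in zip(g_gen, g_style)
        for a, b in zip(row_gen, row_style)
    )


# Two channels, three spatial positions each (made-up numbers).
style = [[1.0, 2.0, 3.0],
         [0.0, 1.0, 0.0]]
# The same responses with the spatial positions shuffled consistently across channels.
shuffled = [[3.0, 1.0, 2.0],
            [0.0, 0.0, 1.0]]

print(gram_matrix(style))           # [[14.0, 2.0], [2.0, 1.0]]
print(style_loss(shuffled, style))  # 0.0
```

Shuffling the positions leaves the Gram matrix unchanged, so the style loss ignores where a pattern appears and only measures which filter responses occur together. That is the sense in which it captures style rather than content.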

There is an example of style reconstruction and content reconstruction in their paper.

So, how do we generate the artistic picture? For style, we calculate the style reconstruction loss on several layers, starting from the lower ones; for content, we calculate the content reconstruction loss on a higher layer. Minimizing the weighted sum of these losses with respect to the input image generates a cool picture.
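The aggregation step can be sketched as a weighted sum of the per-layer losses. The function name `total_loss` and the weight values below are assumptions for illustration only; in practice the weights are tuned to trade content against style.

```python
def total_loss(content_losses, style_losses, content_weight=1.0, style_weight=10.0):
    """Weighted sum of per-layer content losses and per-layer style losses."""
    return content_weight * sum(content_losses) + style_weight * sum(style_losses)


# Made-up loss values: one content layer, two style layers.
combined = total_loss(content_losses=[2.0], style_losses=[0.5, 0.25])
print(combined)  # 1.0 * 2.0 + 10.0 * 0.75 = 9.5
```

A larger `style_weight` pushes the result toward the artwork's patterns, while a larger `content_weight` keeps it closer to the content picture.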